Artificial intelligence has rapidly moved from being a futuristic concept to an everyday business tool. From writing content and answering customer queries to assisting with coding and research, AI-powered systems are transforming how we interact with technology. At the center of this transformation are Large Language Models (LLMs).

If you have used tools like ChatGPT, you’ve already experienced the power of LLMs. These systems can understand questions, generate human-like responses, summarize information, and even assist with decision-making. But how do they actually work? And why are newer concepts like Retrieval-Augmented Generation (RAG) becoming important?

This guide explains, in simple and practical terms, what Large Language Models are, how ChatGPT works, and what Retrieval-Augmented Generation means.

What Are Large Language Models (LLMs)?

Large Language Models are advanced artificial intelligence systems trained on massive amounts of text data. They learn patterns in language, including grammar, context, meaning, and relationships between words.

Unlike traditional software that follows predefined rules, LLMs generate responses dynamically based on what they have learned during training. This allows them to:

- Answer questions

- Write articles and emails

- Summarize documents

- Translate languages

- Generate code

- Provide recommendations

The term “large” refers to both the size of the training dataset and the number of parameters in the model, which often runs into the billions. Parameters are numerical weights adjusted during training. Together they determine how the model interprets context and which response it predicts.

In simple terms, LLMs do not look up stored answers. Instead, they predict what should come next in a sequence of words based on patterns learned from vast amounts of text.

How LLMs Understand Language

To understand how LLMs work, it helps to break the process into simple steps:

1. Training on Massive Data

LLMs are trained on large datasets that include books, articles, websites, and other publicly available text. This training helps the model learn language structure, tone, and context.

2. Tokenization

Before processing text, the model's tokenizer splits it into smaller units called tokens. A token can be a whole word, part of a word, or a single character, and each token is mapped to a numeric ID that the model works with.

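For example, a simplified word-level split looks like this (real tokenizers often break longer or rarer words into smaller pieces):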

“AI is transforming marketing” → [“AI”, “is”, “transforming”, “marketing”]
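To illustrate how words can be broken into subword pieces, the sketch below uses greedy longest-match splitting over a tiny vocabulary. `VOCAB` is hypothetical and made up for this example, and the code lowercases its input as a simplifying assumption. Production tokenizers, such as those based on byte-pair encoding, learn vocabularies of tens of thousands of pieces from data.

```python
# Hypothetical toy vocabulary; real tokenizers learn their vocabulary from data.
VOCAB = {"ai", "is", "transform", "ing", "market", "marketing"}

def tokenize_word(word: str) -> list[str]:
    """Split one word into the longest vocabulary pieces, scanning left to right."""
    pieces = []
    start = 0
    while start < len(word):
        # Try the longest remaining substring first, then shrink it.
        for end in range(len(word), start, -1):
            piece = word[start:end]
            if piece in VOCAB:
                pieces.append(piece)
                start = end
                break
        else:
            # No vocabulary entry matches this character: emit an unknown-token marker.
            pieces.append("[UNK]")
            start += 1
    return pieces

def tokenize(text: str) -> list[str]:
    """Lowercase, split on whitespace, then split each word into subword pieces."""
    tokens = []
    for word in text.lower().split():
        tokens.extend(tokenize_word(word))
    return tokens

print(tokenize("AI is transforming marketing"))
# ['ai', 'is', 'transform', 'ing', 'marketing']
```

Because "transforming" is not in the toy vocabulary, it is split into the known pieces "transform" and "ing". This is how subword tokenizers handle words they have never seen whole.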

3. Pattern Recognition

During training, the model learns statistical relationships between tokens, such as which words tend to appear together and how sentences are typically structured.

4. Prediction

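When you type a prompt, the model calculates a probability for every possible next token given the context. It then selects one, often by sampling rather than always taking the single most likely option. It repeats this process, adding each new token to the context, to generate full sentences.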

This prediction-based approach is why LLMs can produce natural-sounding responses.
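As a toy illustration of "learn patterns, then predict the next word," the bigram model below counts which word follows which in a small training text. It then generates text by repeatedly picking the most frequent follower. This is only an analogy under simplifying assumptions: whole-word tokens, one word of context, and a greedy choice. Real LLMs use neural networks over long contexts rather than raw counts. The corpus and function names are made up for this example.

```python
from collections import Counter, defaultdict

def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for every word, how often each other word follows it."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def generate(follows: dict[str, Counter], start: str, max_words: int = 5) -> str:
    """Greedily append the most frequent follower of the last word."""
    output = [start]
    for _ in range(max_words):
        candidates = follows.get(output[-1])
        if not candidates:
            break  # no observed continuation for this word
        next_word, _count = candidates.most_common(1)[0]
        output.append(next_word)
    return " ".join(output)

corpus = "ai is transforming marketing . ai is transforming marketing teams . ai is useful ."
model = train_bigrams(corpus)
print(generate(model, "ai", max_words=3))  # ai is transforming marketing
```

"is" is followed by "transforming" twice and by "useful" once, so the greedy generator picks "transforming". An LLM makes the same kind of choice, but from a learned probability distribution that considers far more context.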

How ChatGPT Works

ChatGPT is powered by a Large Language Model designed for conversational interaction. It is optimized to understand prompts and generate helpful, structured responses.

Here is a simplified explanation of how ChatGPT works:

Step 1: User Input

You type a question or prompt such as:

“Explain SEO in simple terms.”

Step 2: Understanding Context

The model processes the prompt as tokens in context, so words like “explain” and “SEO” together signal that a simple explanation of SEO is required.

Step 3: Processing with Neural Networks

The model processes the input using a transformer architecture. Its attention mechanism lets the model weigh how strongly words relate to one another in context.
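Transformers relate words to one another through a mechanism called attention. The sketch below is a minimal, single-head version of scaled dot-product self-attention, softmax(QKᵀ/√d)·V, written in plain Python. It deliberately omits the causal masking that ChatGPT-style models apply so that each token only attends to earlier ones. The 2-dimensional vectors are invented numbers. In a real model, query, key, and value vectors come from learned projections of each token's embedding and are much larger.

```python
import math

def softmax(scores: list[float]) -> list[float]:
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention: each output is a weighted mix of the value vectors."""
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Weighted sum of the value vectors, one dimension at a time.
        out = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical 2-d vectors standing in for the tokens "explain", "SEO", "simply".
vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, weights = attention(vectors, vectors, vectors)  # self-attention: Q = K = V
for row in weights:
    print([round(w, 2) for w in row])
```

Each row of `weights` shows how much one token "looks at" every token when its new representation is computed. Tokens with similar vectors receive higher weight.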

Step 4: Response Generation

The model generates the response one token at a time. Each time, it predicts the next token from the prompt plus everything it has generated so far.
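At each generation step, the model assigns a score (a logit) to every token in its vocabulary, and a decoding strategy turns those scores into a choice. The sketch below shows temperature sampling, one widely used strategy. The four-token vocabulary, its scores, and the function names are hypothetical and invented for illustration.

```python
import math
import random

def next_token_probs(logits: dict[str, float], temperature: float = 1.0) -> dict[str, float]:
    """Softmax over temperature-scaled logits; lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = {tok: score / temperature for tok, score in logits.items()}
    m = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - m) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def sample_next_token(logits: dict[str, float], temperature: float = 1.0, rng=random) -> str:
    """Draw one token at random, in proportion to its probability."""
    probs = next_token_probs(logits, temperature)
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]

# Hypothetical scores for the word after "SEO stands for search engine ..."
logits = {"optimization": 5.0, "marketing": 2.0, "results": 1.5, "banana": -3.0}
print(next_token_probs(logits, temperature=0.5))
print(sample_next_token(logits, temperature=0.8, rng=random.Random(42)))
```

Lower temperatures concentrate probability on the top-scoring token, which makes output more predictable. Higher temperatures spread probability out, which makes output more varied.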

Step 5: Output Delivery

The generated text is displayed as the final response.

The entire process happens within seconds, which is why ChatGPT feels interactive and conversational.

Limitations of Traditional LLMs

Although LLMs are powerful, they have certain limitations:

1. Knowledge Cutoff

LLMs are trained on data available up to a specific time. They may not know recent events unless connected to external data.

2. Hallucinations

Sometimes LLMs generate incorrect information confidently. This happens because they produce statistically plausible text based on learned patterns and do not verify their claims against a reliable source.

3. Lack of Source Attribution

Traditional LLMs often provide answers without citing specific sources.

4. Static Knowledge

They do not automatically update themselves with new information.

These limitations led to the development of Retrieval-Augmented Generation (RAG).

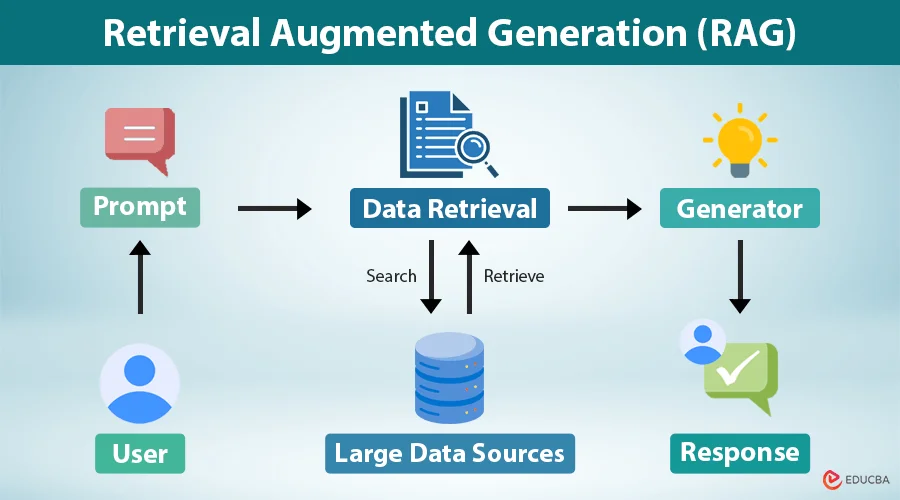

What is Retrieval-Augmented Generation (RAG)?

Retrieval-Augmented Generation (RAG) is a technique that enhances Large Language Models by connecting them to external data sources. Instead of relying only on training data, the model retrieves relevant information before generating a response.

In simple terms, RAG combines:

- Information retrieval

- Language generation

This approach can improve accuracy and relevance. It also makes answers easier to trust, because they are grounded in specific documents.

How Retrieval-Augmented Generation Works

RAG works in three main steps:

Step 1: Query Understanding

The system receives a user query and analyzes the intent.

Example:

“What are the benefits of AI in SEO?”

Step 2: Retrieval

The system searches a database, documents, or knowledge base for relevant information.

This could include:

- Internal company data

- Website content

- Research documents

- Product information

- Knowledge bases
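One common way to implement the retrieval step over text is keyword ranking with the Okapi BM25 formula. The sketch below scores a few made-up documents against the example query. The documents, the simple regex tokenizer, and the parameter values `k1=1.5` and `b=0.75` (typical defaults) are assumptions for illustration. Many RAG systems use vector search instead of, or alongside, keyword ranking.

```python
import math
import re
from collections import Counter

def tokenize(text: str) -> list[str]:
    """Lowercase and keep runs of letters and digits (drops punctuation)."""
    return re.findall(r"[a-z0-9]+", text.lower())

def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Score every document against the query with the Okapi BM25 formula."""
    tokenized = [tokenize(doc) for doc in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(d) for d in tokenized) / n_docs
    # Document frequency: how many documents contain each term.
    df = Counter(term for d in tokenized for term in set(d))
    scores = []
    for d in tokenized:
        tf = Counter(d)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            # Rare terms get a higher inverse document frequency (IDF) weight.
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1)
            # Term frequency, saturated by k1 and normalized by document length.
            norm = tf[term] + k1 * (1 - b + b * len(d) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores

def retrieve(query: str, docs: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k highest-scoring documents."""
    scores = bm25_scores(query, docs)
    ranked = sorted(range(len(docs)), key=lambda i: scores[i], reverse=True)
    return [docs[i] for i in ranked[:top_k]]

# Hypothetical knowledge base.
knowledge_base = [
    "AI tools help SEO teams research keywords and audit pages faster",
    "Our refund policy allows returns within 30 days",
    "Product X supports single sign-on and exports to CSV",
]
print(retrieve("What are the benefits of AI in SEO?", knowledge_base, top_k=1))
# ['AI tools help SEO teams research keywords and audit pages faster']
```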

Step 3: Augmented Generation

The retrieved information is passed to the LLM, which then generates a response based on both:

- Retrieved data

- Pre-trained knowledge

This results in more accurate and context-aware answers.
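In practice, augmented generation usually means building a prompt that places the retrieved passages next to the user's question before it is sent to the LLM. The template below is a hypothetical example. Its wording, the numbered `[n]` source labels, and the function name `build_augmented_prompt` are assumptions, not a standard format. Numbering the passages is one way an implementation can ask the model to cite its sources.

```python
def build_augmented_prompt(question: str, passages: list[str]) -> str:
    """Combine retrieved passages and the user question into one prompt for the LLM."""
    # Label each passage [1], [2], ... so the answer can refer back to it.
    numbered = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer the question using only the sources below. "
        "Cite sources by number, e.g. [1]. If the sources do not contain "
        "the answer, say so.\n\n"
        f"Sources:\n{numbered}\n\n"
        f"Question: {question}\nAnswer:"
    )

prompt = build_augmented_prompt(
    "What are the benefits of AI in SEO?",
    ["AI tools help SEO teams research keywords and audit pages faster"],
)
print(prompt)
```

The resulting string is what the language model actually sees. Its answer is therefore shaped by both the retrieved text and its pre-trained knowledge.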

Difference Between LLM and RAG

Large Language Models generate responses based only on their training data and the prompt they receive. RAG enhances this by adding real-time or external information.

LLM:

- Uses pre-trained knowledge

- May produce outdated responses

- No direct access to new data

RAG:

- Retrieves relevant information first

- Generates more accurate responses

- Can use real-time or internal data

This is why many enterprise AI systems now use RAG.

Why RAG is Important

Retrieval-Augmented Generation solves many challenges associated with traditional LLMs.

Improves Accuracy

By grounding answers in retrieved documents, the system can reduce hallucinations.

Enables Real-Time Knowledge

RAG can access updated data sources.

Better Contextual Responses

Responses are tailored using specific information.

Source-Based Answers

Some implementations also provide citations.

Enterprise Use Cases

Companies can connect LLMs to internal knowledge bases.

Real-World Use Cases of RAG

Customer Support Automation

AI can retrieve information from help documentation before answering customers.

Enterprise Knowledge Assistants

Employees can ask questions and get answers from internal documents.

SEO and Content Research

AI can retrieve website data and generate optimized content.

E-commerce Product Assistance

AI can pull product specs and generate recommendations.

Healthcare Knowledge Systems

Doctors can query medical databases with AI support.

LLM vs RAG vs Fine-Tuning

These three approaches are often confused.

LLM Only

Uses base model knowledge.

Fine-Tuning

The base model is further trained on a specific dataset, which updates its parameters.

RAG

Retrieves relevant information dynamically.

RAG is often preferred because:

- No need to retrain models

- Easy to update data

- Cost-effective

- More flexible

How RAG Improves ChatGPT-like Systems

When RAG is integrated into conversational AI:

- Answers become more reliable

- Responses are domain-specific

- Data remains up-to-date

- Hallucinations become less frequent

This is why many AI-powered search engines and enterprise tools are moving toward RAG-based architectures.

Architecture of a RAG System

A typical RAG system includes:

- Data source (documents, website content, PDFs)

- Embedding model (converts text to vectors)

- Vector database (stores embeddings)

- Retriever (finds relevant information)

- LLM generator (creates final response)

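At query time, the user's question is converted to a vector by the same embedding model. The retriever then looks up the stored embeddings closest to it, and the matching text is passed to the LLM generator. This pipeline allows the system to retrieve and generate information efficiently.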
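To make these components concrete, here is a minimal in-memory sketch of the embedding, storage, and retrieval parts. `hash_embed` is a stand-in, not a trained embedding model. It hashes word counts into a fixed-size vector, so it only captures shared words, not meaning. `InMemoryVectorStore` is a hypothetical class, not the API of any real vector database. The LLM generator step is left out.

```python
import math
import re
import zlib

DIM = 256  # size of the toy embedding vectors (assumption)

def hash_embed(text: str) -> list[float]:
    """Stand-in embedding: hash each word into one of DIM buckets, then L2-normalize."""
    vec = [0.0] * DIM
    for word in re.findall(r"[a-z0-9]+", text.lower()):
        # crc32 is deterministic across runs, unlike Python's salted str hash().
        vec[zlib.crc32(word.encode()) % DIM] += 1.0
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]

class InMemoryVectorStore:
    """Hypothetical minimal vector database holding (embedding, text) pairs."""

    def __init__(self) -> None:
        self.items: list[tuple[list[float], str]] = []

    def add(self, text: str) -> None:
        self.items.append((hash_embed(text), text))

    def search(self, query: str, top_k: int = 2) -> list[str]:
        q = hash_embed(query)
        # Vectors are unit length, so the dot product equals cosine similarity.
        scored = [(sum(a * b for a, b in zip(q, v)), text) for v, text in self.items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:top_k]]

store = InMemoryVectorStore()
for doc in [
    "Shipping takes three to five business days",
    "Refunds are issued within 30 days of purchase",
    "Our API supports single sign-on",
]:
    store.add(doc)
print(store.search("How many days does shipping take?", top_k=1))
```

Swapping `hash_embed` for a real embedding model, and the list for a real vector database, keeps the same add-then-search structure.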

Benefits of RAG for Businesses

Businesses adopting RAG-based AI gain:

- More accurate AI responses

- Reduced misinformation risk

- Better customer experience

- Faster knowledge access

- Improved automation

- Scalable AI systems

RAG is particularly useful for:

- SaaS companies

- Marketing agencies

- E-commerce platforms

- Healthcare organizations

- Educational platforms

Challenges of RAG

While powerful, RAG also has challenges:

- Requires well-structured data

- Retrieval quality impacts results

- Infrastructure complexity

- Vector database management

- Latency considerations

However, these challenges are manageable with proper implementation.
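Because retrieval quality limits answer quality, it helps to measure the retriever on its own. One common metric is recall@k. For each test question with known relevant documents, it is the fraction of those documents that appear in the top k retrieved results. The document IDs and evaluation set below are hypothetical.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear among the first k retrieved."""
    if not relevant:
        raise ValueError("need at least one relevant document")
    hits = len(set(retrieved[:k]) & relevant)
    return hits / len(relevant)

def mean_recall_at_k(results: list[tuple[list[str], set[str]]], k: int) -> float:
    """Average recall@k over a set of evaluation queries."""
    return sum(recall_at_k(r, rel, k) for r, rel in results) / len(results)

# Hypothetical evaluation data: (ranked retrieval output, known relevant IDs).
eval_set = [
    (["doc7", "doc2", "doc9"], {"doc2"}),          # relevant doc ranked 2nd
    (["doc4", "doc1", "doc3"], {"doc3", "doc8"}),  # one of two relevant docs, at rank 3
]
print(mean_recall_at_k(eval_set, k=2))  # 0.5
print(mean_recall_at_k(eval_set, k=3))  # 0.75
```

Tracking a number like this makes it easier to see whether changes to chunking, embeddings, or ranking actually help before they reach users.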

Future of LLMs and RAG

The combination of LLMs and Retrieval-Augmented Generation is shaping the future of AI. We are moving toward systems that are:

- More accurate

- More transparent

- Context-aware

- Personalized

- Real-time enabled

AI search engines, enterprise assistants, and automation tools are increasingly using RAG to deliver better responses.

As AI adoption grows, understanding LLMs and RAG will become essential for marketers, developers, and business leaders.

Conclusion

As AI continues to evolve, Large Language Models and Retrieval-Augmented Generation are reshaping how search engines, businesses, and users interact with information. Understanding how ChatGPT works and how RAG improves accuracy is essential for organizations that want to stay competitive in AI-driven search.

For businesses, this shift also means adapting SEO strategies, improving structured content, and optimizing for AI-generated search experiences. Companies that align their digital presence with AI search behavior will gain better visibility, improved engagement, and stronger conversions.

This is where Matrix Bricks can help. With expertise in digital marketing, SEO, and digital transformation services, Matrix Bricks helps businesses optimize their content for AI-driven search engines, implement advanced SEO strategies, and build scalable digital growth frameworks. From AI SEO optimization to enterprise-level digital transformation, their solutions are designed to improve search visibility and future-proof your online presence.

By leveraging AI-powered strategies and modern SEO practices, businesses can stay ahead in the evolving landscape of Large Language Models and Retrieval-Augmented Generation.

Frequently Asked Questions

What are Large Language Models (LLMs)?

Large Language Models are AI systems trained on massive datasets to understand context and generate human-like responses. They power tools like ChatGPT, AI assistants, and AI search engines.

How does ChatGPT work?

ChatGPT uses a transformer-based Large Language Model to analyze user prompts, understand intent, and generate responses one token at a time, predicting each next token from the context.

What is Retrieval-Augmented Generation (RAG)?

Retrieval-Augmented Generation is an AI technique that retrieves relevant information from external data sources before generating responses, improving accuracy and reliability.

What is the difference between LLM and RAG?

LLMs rely only on training data, while RAG retrieves updated information before generating responses, reducing hallucinations and improving accuracy.

Why is RAG important for AI search?

RAG improves AI search by providing context-aware, accurate, and up-to-date answers, which enhances user trust and search experience.

How do LLMs impact SEO?

LLMs change how search engines generate answers, making content quality, authority, and structured information more important for AI-driven search visibility.

Can businesses use RAG for customer support?

Yes, businesses can integrate RAG with internal knowledge bases to provide accurate and real-time AI-powered customer support.